publications

These are my publications, grouped by year with the most recent year first.

2025

-

German General Social Survey Personas: A Survey-Derived Persona Prompt Collection for Population-Aligned LLM Studies. Nov 2025. Accepted at LREC 2026.

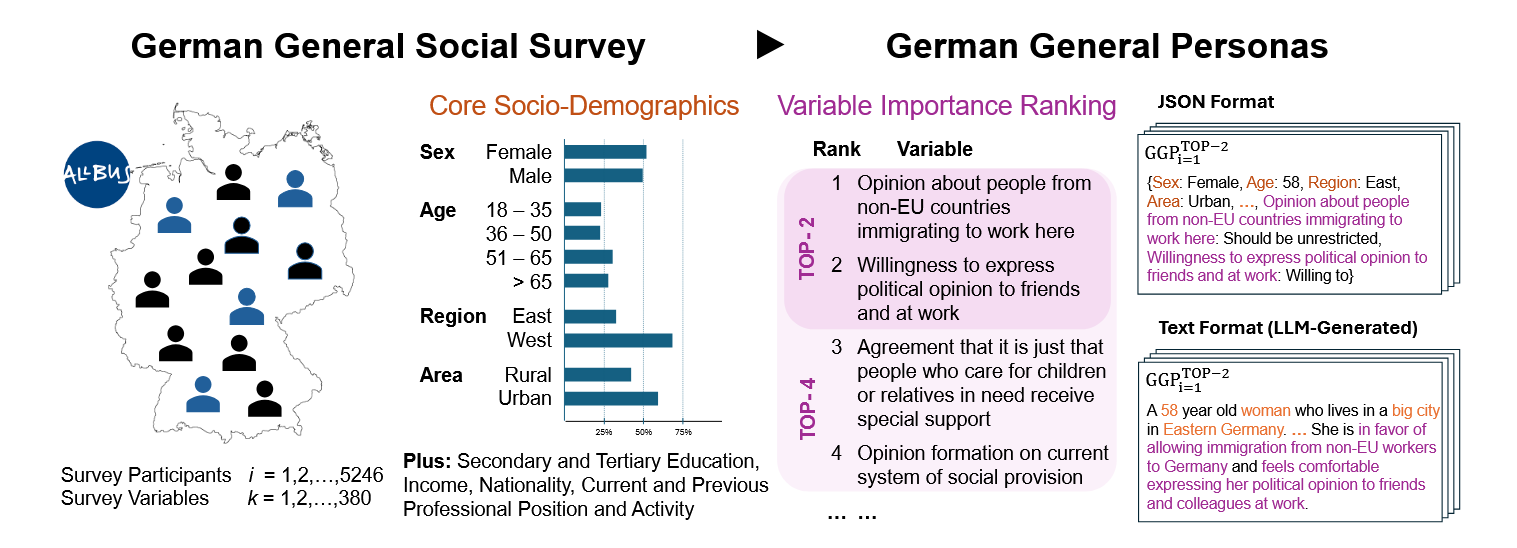

The use of Large Language Models (LLMs) for simulating human perspectives via persona prompting is gaining traction in computational social science. However, well-curated, empirically grounded persona collections remain scarce, limiting the accuracy and representativeness of such simulations. Here we introduce the German General Social Survey Personas (GGSS Personas) collection, a comprehensive and representative persona prompt collection built from the German General Social Survey (ALLBUS). The GGSS Personas and its persona prompts are designed to be easily plugged into prompts for all types of LLMs and tasks, steering models to generate responses aligned with the underlying German population. We evaluate GGSS Personas by prompting various LLMs to simulate survey response distributions across diverse topics, demonstrating that GGSS-guided LLMs outperform state-of-the-art classifiers, particularly under data scarcity. Furthermore, we analyze how the representativity and attribute selection within persona prompts affect alignment with population responses. Our findings suggest that GGSS Personas provides a potentially valuable resource for research on LLM-based social simulations that enables more systematic explorations of population-aligned persona prompting in NLP and social science research.

-

GGSS Personas: German General Social Survey Persona Prompt Collection. Nov 2025. GESIS Data Archive, Cologne. ZA9089

-

Prompt Perturbations Reveal Human-Like Biases in LLM Survey Responses. Jens Rupprecht, Georg Ahnert, and Markus Strohmaier. Jul 2025.

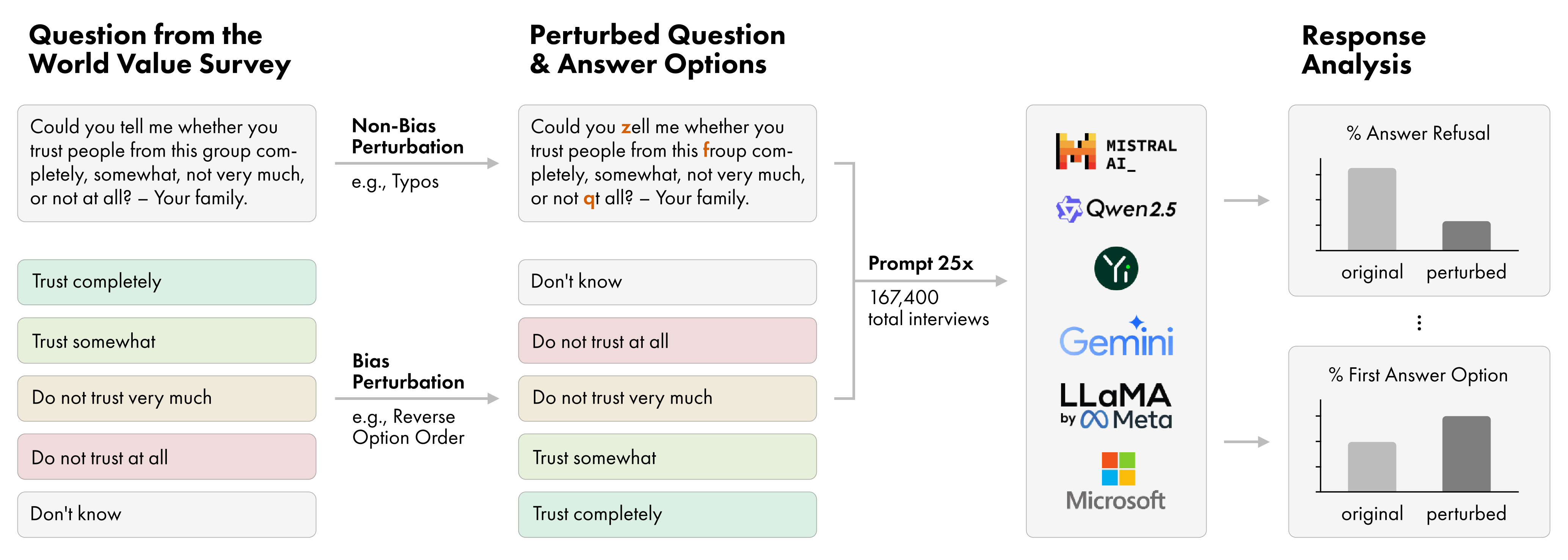

Large Language Models (LLMs) are increasingly used as proxies for human subjects in social science surveys, but their reliability and susceptibility to known response biases are poorly understood. This paper investigates the response robustness of LLMs in normative survey contexts – we test nine diverse LLMs on questions from the World Values Survey (WVS), applying a comprehensive set of 11 perturbations to both question phrasing and answer option structure, resulting in over 167,000 simulated interviews. In doing so, we not only reveal LLMs’ vulnerabilities to perturbations but also show that all tested models exhibit a consistent *recency bias* of varying intensity, disproportionately favoring the last-presented answer option. While larger models are generally more robust, all models remain sensitive to semantic variations like paraphrasing and to combined perturbations. By applying a set of perturbations, we reveal that LLMs partially align with survey response biases identified in humans. This underscores the critical importance of prompt design and robustness testing when using LLMs to generate synthetic survey data.

-

QSTN: A Modular Framework for Robust Questionnaire Inference with Large Language Models. Dec 2025. Accepted at EACL 2026 System Demonstrations.

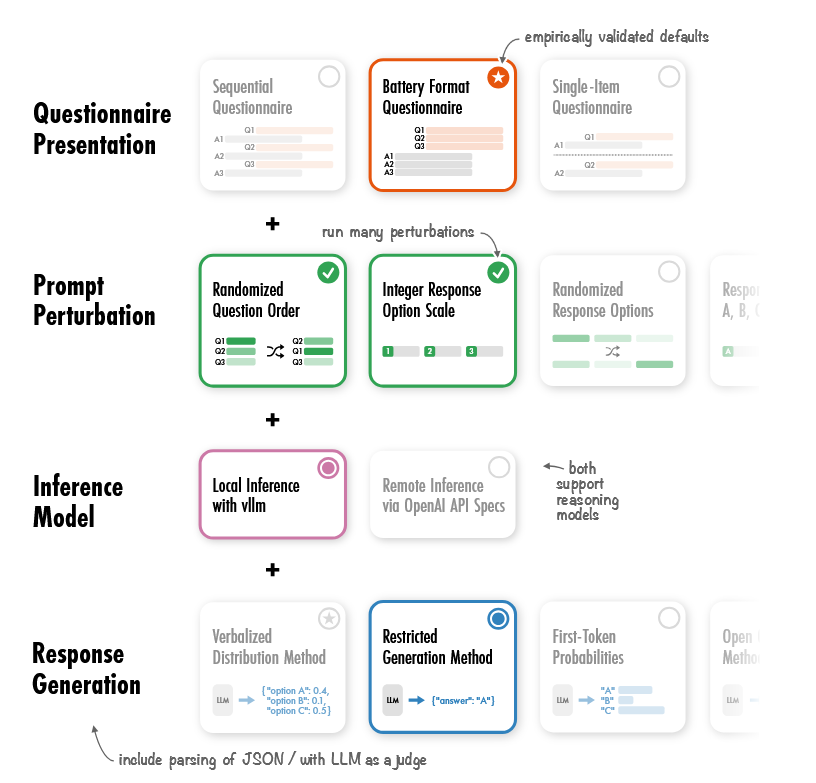

We introduce QSTN, an open-source Python framework for systematically generating responses from questionnaire-style prompts to support in-silico surveys and annotation tasks with large language models (LLMs). QSTN enables robust evaluation of questionnaire presentation, prompt perturbations, and response generation methods. Our extensive evaluation (>40 million survey responses) shows that question structure and response generation methods have a significant impact on the alignment of generated survey responses with human answers. We also find that answers can be obtained for a fraction of the compute cost by changing the presentation method. In addition, we offer a no-code user interface that allows researchers to set up robust experiments with LLMs without coding knowledge. We hope that QSTN will support the reproducibility and reliability of LLM-based research in the future.

2023

-

Schnellladen in der Stadt 1. Felix Röckle, Carolin Heer, and Jens Rupprecht. Dec 2023.

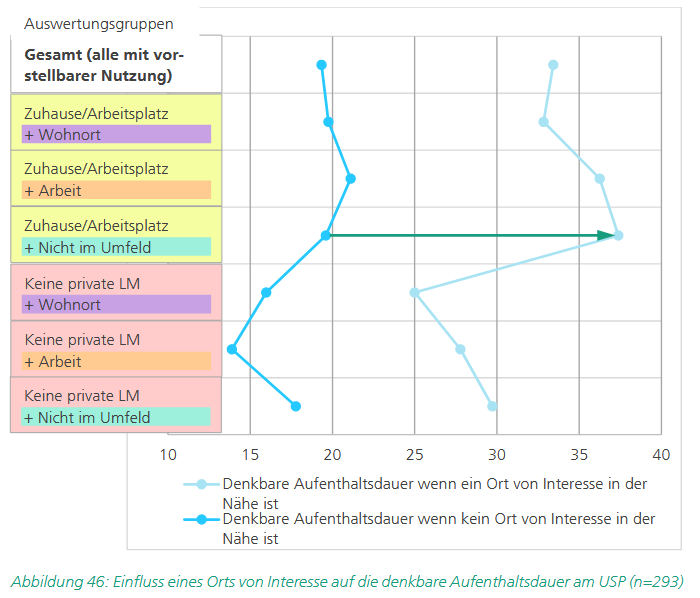

In the context of the market ramp-up of battery-electric passenger cars, charging infrastructure is a frequent topic of discussion, in particular the question of where and how people without a private parking space will be able to charge an electric car in the future. One possible solution is urban fast-charging parks: dedicated parking areas in urban space with several, usually 4-6, fast-charging stations offering 8-12 charging points for electric vehicles. This white paper sets out to what extent this charging option is actually attractive from the users' perspective, how urban fast-charging parks are used today, and which requirements they must meet to further increase the acceptance of battery-electric vehicles in the future.

2022

-

"Charging Process Chain". Felix Röckle, Marco Raul Soares Amorim, Lukas Keicher, and 2 more authors. Dec 2022.

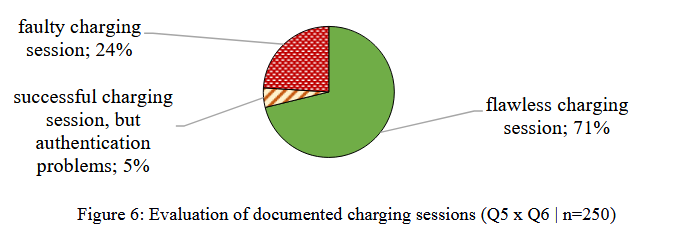

"Charging Process Chain"Felix Röckle, Marco Raul Soares Amorim, Lukas Keicher, and 2 more authorsDec 2022As part of the "Wirkkette Laden" funding project, a holistic approach for analysing weak points in the charging ecosystem was developed and applied in collaboration between research and industry. The approach is based on four building blocks: Statistical data analysis, a diary study, a charging experiment, and an AI-based analysis of online user comments. The results show that there are challenges both in terms of the individual components of the charging ecosystem, such as charging stations, and in terms of the interfaces between the components within the system.